Despite the widespread deployment of outdoor cameras, their potential for automated analysis remains largely untapped due, in part, to calibration challenges. The absence of precise camera calibration data, including intrinsic and extrinsic parameters, hinders accurate real-world distance measurements from captured videos.

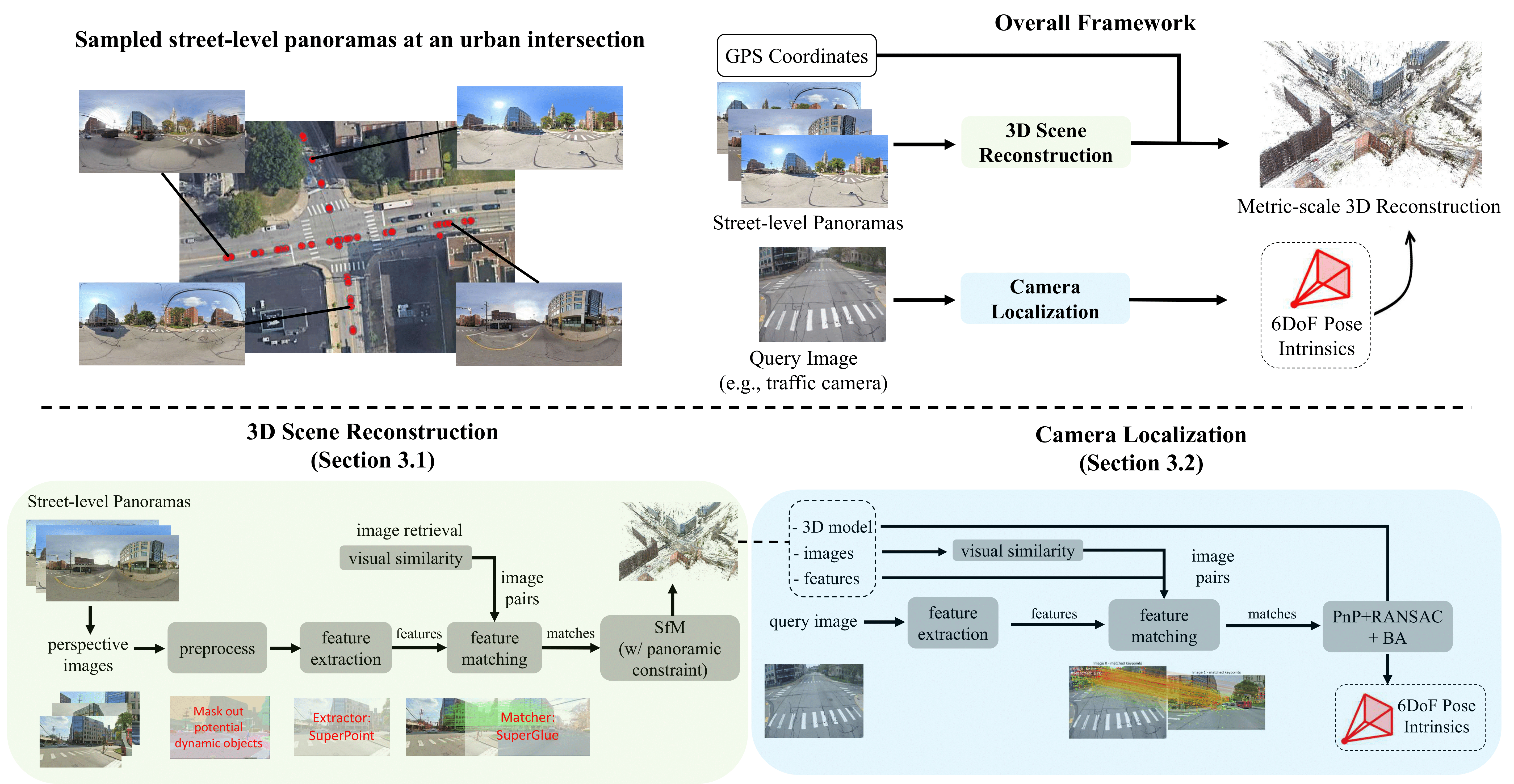

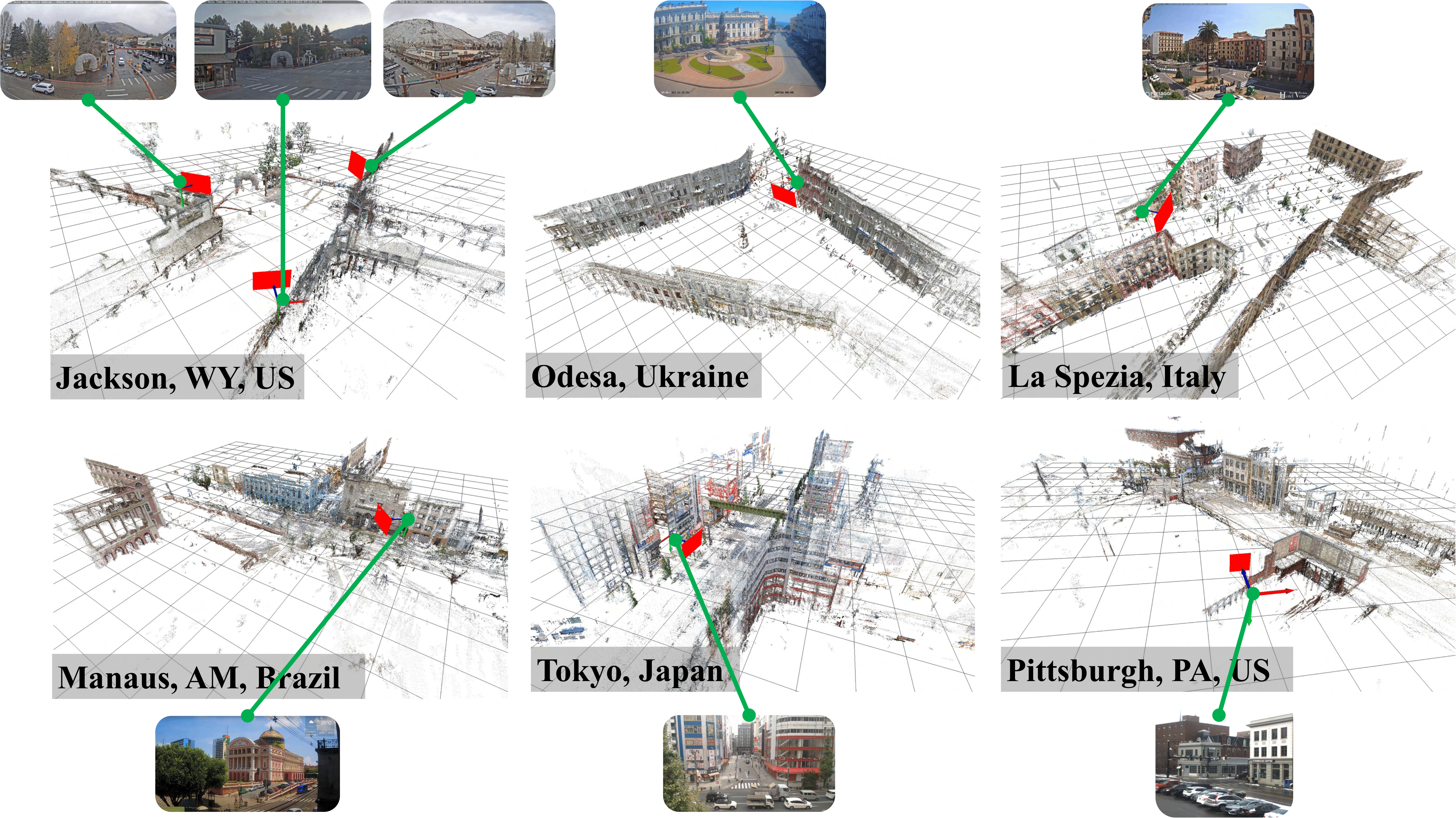

To address this, we present a scalable framework that uses street-level imagery to reconstruct a metric 3D model of the scene, which in turn enables precise calibration of in-the-wild traffic cameras. Our framework reconstructs the 3D scene and accurately localizes more than 100 traffic cameras worldwide, and it extends to any camera whose surroundings are covered by sufficient street-level imagery.

For evaluation, we introduce a dataset of 20 fully calibrated traffic cameras and show that our method significantly improves on existing automatic calibration techniques. We also demonstrate the approach's utility for traffic analysis through 3D vehicle reconstruction and speed measurement, opening up the potential of outdoor cameras for automated analysis.
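To illustrate why calibration matters for distance and speed measurement, the sketch below back-projects pixels onto the ground using known intrinsic and extrinsic parameters. It is a generic pinhole-camera example, not the method from the paper: it assumes a flat ground plane at Z = 0, no lens distortion, and a world-to-camera convention `X_cam = R @ X_world + t`. The function names `pixel_to_ground` and `speed_kmh` and all numeric values are hypothetical.

```python
import math


def pixel_to_ground(u, v, fx, fy, cx, cy, R, t):
    """Intersect the viewing ray of pixel (u, v) with the ground plane Z = 0.

    Assumptions (illustrative only): pinhole camera without distortion,
    R is a 3x3 rotation (nested lists) and t a length-3 translation such
    that X_cam = R @ X_world + t, and the ground is flat.
    Returns the (X, Y) ground coordinates in metres.
    """
    # Ray direction in the camera frame, i.e. K^-1 @ [u, v, 1].
    d_cam = ((u - cx) / fx, (v - cy) / fy, 1.0)
    # Rotate the ray into the world frame: d_world = R^T @ d_cam.
    d = [sum(R[r][c] * d_cam[r] for r in range(3)) for c in range(3)]
    # Camera centre in the world frame: C = -R^T @ t.
    C = [-sum(R[r][c] * t[r] for r in range(3)) for c in range(3)]
    if abs(d[2]) < 1e-12:
        raise ValueError("viewing ray is parallel to the ground plane")
    s = -C[2] / d[2]  # ray parameter at which Z becomes 0
    if s <= 0:
        raise ValueError("ground intersection lies behind the camera")
    return (C[0] + s * d[0], C[1] + s * d[1])


def speed_kmh(p0, p1, dt_seconds):
    """Average speed between two ground points observed dt_seconds apart."""
    metres = math.hypot(p1[0] - p0[0], p1[1] - p0[1])
    return metres / dt_seconds * 3.6


# Hypothetical camera 10 m above the ground, looking straight down.
R_down = [[1.0, 0.0, 0.0], [0.0, -1.0, 0.0], [0.0, 0.0, -1.0]]
t_down = [0.0, 0.0, 10.0]
intrinsics = dict(fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)

# The same vehicle point tracked in two frames 0.1 s apart.
p_first = pixel_to_ground(640.0, 360.0, R=R_down, t=t_down, **intrinsics)
p_second = pixel_to_ground(740.0, 360.0, R=R_down, t=t_down, **intrinsics)
print(p_first, p_second, speed_kmh(p_first, p_second, 0.1))
```

Without the intrinsics and extrinsics, the 100-pixel displacement in this example has no metric meaning. With them, it corresponds to a ground displacement in metres, from which a speed follows.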

Image from traffic camera

3D reconstruction (traffic camera in red)

@inproceedings{vuong2024trafficcalib,
  title = {Toward Planet-Wide Traffic Camera Calibration},
  author = {Vuong, Khiem and Tamburo, Robert and Narasimhan, Srinivasa G.},
  booktitle = {IEEE Winter Conference on Applications of Computer Vision},
  year = {2024},
}

Potential Societal Impact and Data Licensing

We do not perform any human-subject studies using these cameras. To preserve the privacy of the people and vehicles captured in the images, we blur faces and license plates in all images to be released. This study is designated as non-human-subjects research by our Institutional Review Board (IRB).

We do not release or use Google Street View data for commercial purposes. If you download and use the data yourself, follow the Google Street View Attribution Guidelines.

Acknowledgements

This work was supported in part by NSF Grant CNS-2038612, DOT RITA Mobility-21 Grant 69A3551747111, and the Intelligence Advanced Research Projects Activity (IARPA) via Department of Interior/Interior Business Center (DOI/IBC) contract number 140D0423C0074. The U.S. Government is authorized to reproduce and distribute reprints for Governmental purposes notwithstanding any copyright annotation thereon. Disclaimer: The views and conclusions contained herein are those of the authors and should not be interpreted as necessarily representing the official policies or endorsements, either expressed or implied, of IARPA, DOI/IBC, or the U.S. Government.